Dask on the Cloud

No complex K8s config. Docker not required. Parallel Python that just works.

Your Python Code, Just Bigger

Scale your pandas, NumPy, and scikit-learn code from your laptop to the cloud.

Dask DataFrame for tabular data

- Mimics the pandas API for minimal code changes

- Easily scale from 10 GiB to 100 TiB

- Faster than Spark and easier too

- Access your data from pretty much anywhere

import coiled

import dask.dataframe as dd

# Run on the cloud

cluster = coiled.Cluster(n_workers=10)

client = cluster.get_client()

# Read from S3/Snowflake/Delta Lake

df = dd.read_parquet("s3://coiled-data/uber/")

# Process data

result = df.groupby("service").tip_amount.mean()

import coiled

import dask.dataframe as dd

# Run on the cloud

cluster = coiled.Cluster(n_workers=10)

client = cluster.get_client()

# Read from S3/Snowflake/Delta Lake

df = dd.read_parquet("s3://coiled-data/uber/")

# Process data

result = df.groupby("service").tip_amount.mean()

Dask Deployment Made Simple

Focus on your data science, not infrastructure. Coiled handles the heavy lifting.

Deploy Dask clusters without the complexity

- Skip setting up Kubernetes, node pools, and YAML configs

- No need to manage Dask Gateway or Cloud Provider

- Deploy to any region on AWS, GCP, or Azure instantly

- Access GPUs, ARM instances, and any VM type in one line

import coiled

# Deploy a powerful Dask cluster in one command

cluster = coiled.Cluster(

n_workers=100,

worker_cpu=8,

arm=True,

region="us-east-1",

spot_policy="spot_with_fallback",

)

client = cluster.get_client()import coiled

# Deploy a powerful Dask cluster in one command

cluster = coiled.Cluster(

n_workers=100,

worker_cpu=8,

arm=True,

region="us-east-1",

spot_policy="spot_with_fallback",

)

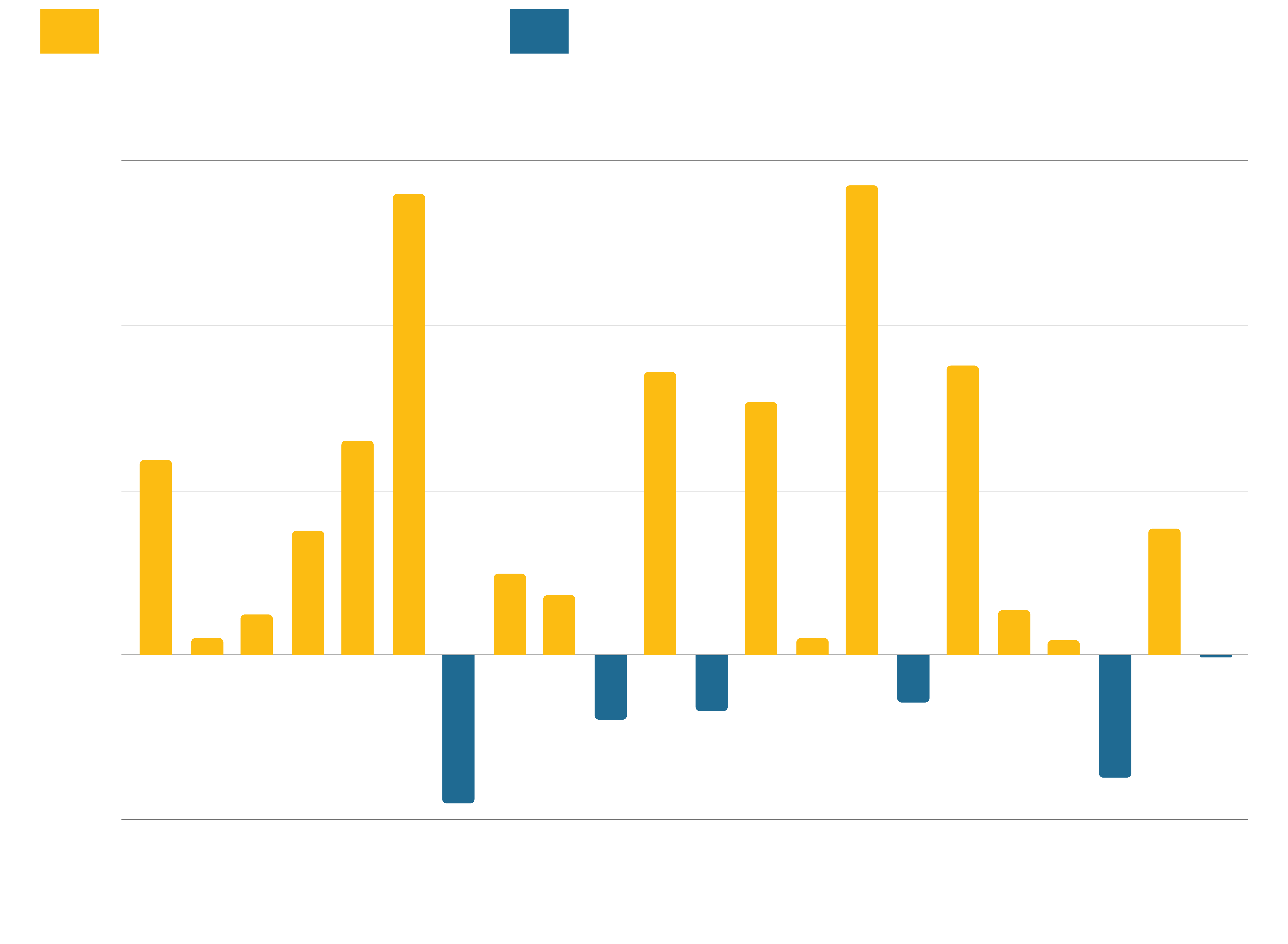

client = cluster.get_client()Faster than Spark

... and less painful too!

Dask DataFrame on Coiled easily beats Apache Spark on standard benchmarks like TPC-H.

And your sanity remains intact.

- Twice as fast, on average

- Doesn't require intense configuration

- Easier to debug (unless you love the JVM)

- Easy to scale with Coiled (and cheaper than Databricks)

Ad tech at scale

Learn how NextRoll cut costs by 70% switching from EMR to Coiled. Processing 50 billion daily ad auctions with millions of products while eliminating the painful disconnect between development and production.

From Spark complexity to Python simplicity.

- Replaced EMR + Spark with Dask for 70% cost savings

- Python-native stack eliminated dev-to-prod disconnect

- Simplified debugging from JVM dumps to Python exceptions

- Orchestration upgrade from Airflow to Dagster

- Secure data processing within their AWS VPC

Trusted by Data Teams Worldwide

From startups to Fortune 500s, teams choose Dask on Coiled

"We converted all our code from pandas to Dask, then thought we'd have to worry about scaling. We signed up for Coiled, added two or three lines of code and the cluster spun up. Everything just worked."

Jack Solomon

Co-founder, Guac

"The ability to just point Coiled at a Docker image and have it just work is amazing. There's zero installation involved. It's just one function call and it's great."

Matt Plough

Software Engineer, KoBold Metals

"Data privacy was non-negotiable—our customer data couldn't leave our AWS VPC. Coiled's approach keeps everything in our environment. We fell in love with that workflow."

Asif Imran

Senior Staff ML Engineer, NextRoll

"Coiled honestly was a breath of fresh air. The seamlessness of prototyping locally and then that environment just exporting itself up to a cluster was one of the biggest things that made me go, 'This is a really good product.'"

Paul Naidoo

Data Scientist, EOLAS Insight

FAQ

Dask is parallel computing for Python.

It provides familiar pandas and NumPy APIs that scale from laptops to clusters. You can:

- Process datasets larger than memory

- Parallelize existing Python code with minimal changes

- Scale machine learning workloads across multiple machines

- Analyze geospatial data at any scale

The key advantage is that you don't need to learn new APIs or rewrite your code.

Dask is Python-native, Spark is JVM-based.

Key differences:

- Language: Dask is pure Python, Spark is Scala with Python bindings

- APIs: Dask uses pandas/NumPy APIs, Spark has its own DataFrame API

- Ecosystem: Dask integrates natively with the Python ecosystem

- Performance: Dask often outperforms Spark on Python workloads

- Flexibility: Dask supports task-based parallelism for complex algorithms

See our detailed comparison for more information.

Multiple deployment options to fit your needs.

Coiled is the easiest way to run Dask on the cloud, but there are also a number of open-source options:

- Dask Cloud Provider: Direct VM management on AWS, GCP, Azure

- Kubernetes: Use Dask Gateway or Helm charts

- Yarn: Deploy on EMR, Dataproc with Dask-Yarn

For local development, you can also start with LocalCluster, then scale to the cloud with coiled.Cluster.

Each option uses the same Dask APIs, so you can switch deployment methods without changing your code.

Yes, with minimal changes.

Dask is designed as a drop-in replacement:

# Change this:

import pandas as pd

df = pd.read_parquet("data.parquet")

# To this:

import dask.dataframe as dd

df = dd.read_parquet("data.parquet")

# Change this:

import pandas as pd

df = pd.read_parquet("data.parquet")

# To this:

import dask.dataframe as dd

df = dd.read_parquet("data.parquet")

Most pandas operations work identically. The main difference is calling .compute() to trigger execution when you want results.

If you're new to Dask we recommend the following resources:

- Dask Tutorial: Official Dask learning materials

- Dask Documentation: Complete reference guide

- Dask Forum: Great place to answer questions and get help

Dask is open-source, Coiled is a managed service.

- Dask: Open-source parallel computing library

- Coiled: Commercial platform for deploying Dask in the cloud (founded by Dask's creator)

Think of it like:

- Kubernetes (open-source) vs. Google GKE (managed service)

- Postgres (open-source) vs. AWS RDS (managed service)

You can use Dask anywhere: your laptop, your own cloud infrastructure, or through managed services like Coiled. The Dask documentation recommends Coiled for cloud deployment.

Dask excels at parallelizable Python workloads.

Great for:

- Data processing: ETL, aggregations, time series analysis

- Machine learning: Model training, hyperparameter tuning, feature engineering

- Scientific computing: Climate modeling, geospatial analysis, simulation

- Financial analysis: Risk modeling, backtesting, portfolio optimization

Less ideal for:

- Single-threaded algorithms that can't be parallelized

- Real-time streaming (though it can handle batch processing of streams)

- SQL-heavy workloads (consider specialized databases)

Get started

Know Python? Come use the cloud. Your first $25 of usage per month is on us.

$ pip install coiled

$ coiled quickstart

Grant cloud access? (Y/n): Y

... Configuring ...

You're ready to go. 🎉$ pip install coiled

$ coiled quickstart

Grant cloud access? (Y/n): Y

... Configuring ...

You're ready to go. 🎉